How Citation-Backed Conversational AI Improves Public Access and Internal Decision-Making


“Numerous citations to studies that do not exist.”

That is what auditors have found when reviewing AI-generated legal and policy summaries.

Government agencies, law firms, and compliance teams cannot rely on answers that sound correct but cannot be verified. Dense statutes and layered regulations demand traceability, not just fluency.

That tension creates risk.

- Internal misinterpretation slows decisions.

- Oversimplified public explanations erode trust.

- AI answers without traceable sources multiply the danger.

The real issue isn’t whether AI can summarize a statute. It’s whether the answer can be defended.

The Trust Problem in Legal AI

Most generative AI systems are built for fluency, not accountability. They produce confident responses that sound correct. But in legal, regulatory, and public sector environments, sounding correct is not enough.

An answer must be verifiable. If a system cannot point to the exact statutory clause, regulatory subsection, or policy paragraph that supports its claim, the output is not decision-grade. It becomes an opinion wrapped in confident language.

- For government agencies, that is unacceptable.

- For law firms and lobby firms, it is a liability.

- For legal nonprofits, it undermines advocacy credibility.

Trust is not created by tone. Trust is created by traceability.

What Citation-Backed Conversational AI Actually Means

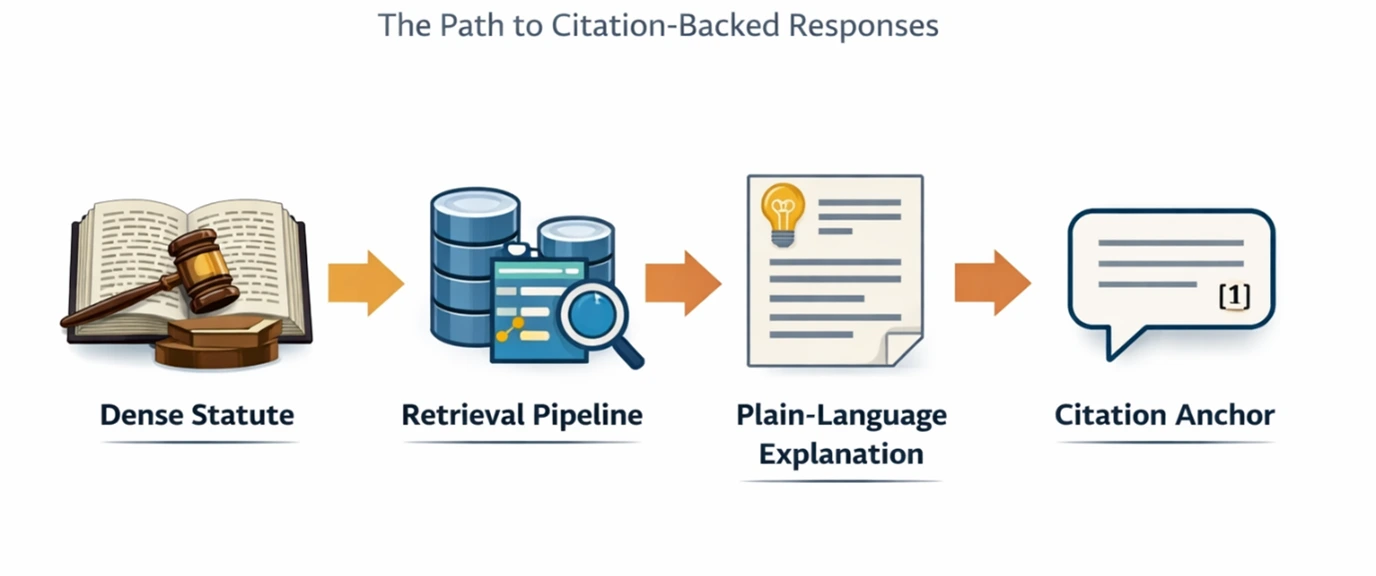

Citation-backed AI is not just “adding a link.” It is an architectural commitment.

A reliable system must:

- Retrieve relevant source passages from structured document stores

- Normalize citations so sections and subsections are indexed correctly

- Use hybrid semantic and keyword retrieval to reduce omission risk

- Present the exact source text alongside a plain-language explanation

- Preserve version history and authority metadata
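A common way to implement hybrid retrieval is reciprocal rank fusion (RRF): run a semantic (vector) search and a keyword search separately, then merge the two ranked lists. The minimal sketch below assumes each retriever has already returned passage IDs in rank order; the IDs and the smoothing constant `k=60` are illustrative assumptions, not any particular product's settings.

```python
from collections import defaultdict


def reciprocal_rank_fusion(rankings: list[list[str]], k: int = 60) -> list[str]:
    """Fuse several ranked lists of passage IDs into one ranking.

    Each passage scores sum(1 / (k + rank)) over the lists it appears in,
    so a passage found by only one retriever still survives the merge.
    """
    scores: dict[str, float] = defaultdict(float)
    for ranking in rankings:
        for rank, passage_id in enumerate(ranking, start=1):
            scores[passage_id] += 1.0 / (k + rank)
    # Highest fused score first; ties broken by ID so output is deterministic.
    return sorted(scores, key=lambda pid: (-scores[pid], pid))


# Hypothetical results from a semantic retriever and a keyword retriever.
semantic_hits = ["sec-4-b-ii", "sec-4-a", "sec-7-c"]
keyword_hits = ["sec-4-b-ii", "sec-12-d"]

fused = reciprocal_rank_fusion([semantic_hits, keyword_hits])
print(fused)  # ['sec-4-b-ii', 'sec-12-d', 'sec-4-a', 'sec-7-c']
```

A passage found only by the keyword retriever (here `sec-12-d`) still appears in the fused list. That is how hybrid retrieval reduces omission risk: exact statutory terms that an embedding model ranks poorly are not silently dropped.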

Without disciplined ingestion pipelines and metadata indexing, citation is cosmetic. With proper governance controls in place, the infrastructure becomes defensible.
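Citation normalization is one concrete piece of that ingestion discipline. The sketch below assumes a hypothetical citation style (a section number followed by parenthesized subsection labels, such as “Section 4(b)(ii)”) and maps common written variants to one canonical index key. Real codes use many formats, so the pattern would need to be adapted per corpus.

```python
import re

# Hypothetical citation format: "Section 4(b)(ii)", "Sec. 4(b)(ii)", "§ 4 (b) (ii)".
_CITATION = re.compile(
    r"^\s*(?:§+|sec(?:tion)?\.?)\s*(?P<section>\d+[a-z]?)"
    r"\s*(?P<subs>(?:\(\s*[a-z0-9]+\s*\)\s*)*)$",
    re.IGNORECASE,
)
# Pulls each parenthesized subsection label out of the matched tail.
_SUBSECTION = re.compile(r"\(\s*([a-z0-9]+)\s*\)", re.IGNORECASE)


def normalize_citation(raw: str) -> str:
    """Return a canonical index key such as 'sec-4-b-ii' for a citation string."""
    match = _CITATION.match(raw)
    if match is None:
        raise ValueError(f"unrecognized citation: {raw!r}")
    parts = [match.group("section").lower()]
    parts += [label.lower() for label in _SUBSECTION.findall(match.group("subs"))]
    return "sec-" + "-".join(parts)


variants = ["Section 4(b)(ii)", "Sec. 4(b)(ii)", "§ 4 (B) (ii)"]
print({normalize_citation(v) for v in variants})  # {'sec-4-b-ii'}
```

Indexing passages under the canonical key, rather than under whatever spelling appeared in the source, is what lets a later lookup for “§ 4(b)(ii)” land on the same passage as “Section 4(b)(ii).”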

This distinction separates experimental AI from institutional AI.

Plain Language Without Legal Drift

Public institutions increasingly prioritize plain-language communication. The intent is sound: legal text is inaccessible to most stakeholders.

But simplification introduces danger: qualifiers disappear, exceptions are omitted, and time conditions get lost.

Citation-backed AI solves this by layering explanation over source authority.

Instead of:

“The agency must file within 30 days.”

Citation-backed AI provides:

“A report must be filed within 30 days when condition X applies. This requirement is defined in Section 4(b)(ii) of the Administrative Code.”

The user can immediately verify the source. The explanation becomes transparent rather than interpretive guesswork.
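One way to enforce that layering is to have the system emit each plain-language sentence together with its citation and the exact source text it relies on, then reject any claim whose quote cannot be found in the cited passage. The sketch below is a minimal version of that check; the `CitedClaim` structure, the dictionary-backed store, and the `sec-4-b-ii` key format are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CitedClaim:
    explanation: str   # plain-language sentence shown to the user
    citation_key: str  # canonical key, e.g. "sec-4-b-ii" (hypothetical format)
    quote: str         # exact source text the explanation relies on


def _normalize_ws(text: str) -> str:
    # Collapse whitespace so line wrapping in the source does not cause false failures.
    return " ".join(text.split())


def unsupported_claims(claims: list[CitedClaim], store: dict[str, str]) -> list[CitedClaim]:
    """Return claims whose citation is missing from the store, or whose quote
    does not appear verbatim (case-sensitive) in the cited passage."""
    failures = []
    for claim in claims:
        passage = store.get(claim.citation_key)
        if passage is None or _normalize_ws(claim.quote) not in _normalize_ws(passage):
            failures.append(claim)
    return failures


# Hypothetical document store keyed by normalized citation.
store = {
    "sec-4-b-ii": "When condition X applies, a report shall be filed\n within 30 days.",
}
claims = [
    CitedClaim(
        "A report must be filed within 30 days when condition X applies.",
        "sec-4-b-ii",
        "a report shall be filed within 30 days",
    ),
    CitedClaim("Extensions are automatic.", "sec-9-a", "extensions are granted"),
]
bad = unsupported_claims(claims, store)
print([c.citation_key for c in bad])  # ['sec-9-a']
```

A claim that cites a section absent from the store, like the second one above, is exactly the “citation to a source that does not exist” failure; a failing check can block the answer or route it to human review.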

Impact Across Core Audiences

- Government agencies: Faster internal policy analysis, consistent public guidance, and reduced reliance on senior experts.

- Law firms and lobby firms: Accelerated legislative review with source accountability and stronger client advisory workflows.

- Legal nonprofits: Clearer community education and advocacy grounded in traceable interpretation rather than paraphrased summaries.

In each case, the value is not just speed; it is reduced interpretive risk.

Beyond Legislation: A Pattern for Any Dense Corpus

The same model applies wherever dense documentation intersects with accountability requirements.

- Compliance manuals

- Procurement policies

- Human resources handbooks

- Standard operating procedures

- Healthcare regulations

- Financial oversight frameworks

Any environment that demands both accessibility and auditability benefits from citation-backed conversational systems.

Citation as Governance, Not a Feature

The most important shift is strategic.

Citation-backed conversational AI is not a productivity enhancement. It is a governance mechanism.

In regulated environments, the question is never simply, “Can AI answer this?” The real question is, “Can we defend this answer if challenged?”
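Defending an answer later requires knowing exactly what was said and which sources it rested on. A minimal sketch, assuming a hypothetical record layout: store the question, the answer, each citation key, and a SHA-256 hash of the exact passage text, so a reviewer can detect whether a source changed after the answer was given.

```python
import hashlib
import json
from datetime import datetime, timezone


def audit_record(question: str, answer: str, cited: dict[str, str]) -> dict:
    """Build a reviewable record of one answer.

    `cited` maps each canonical citation key to the exact passage text used.
    Hashing each passage lets a later reviewer detect if the source changed.
    """
    return {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "question": question,
        "answer": answer,
        "citations": [
            {"key": key, "sha256": hashlib.sha256(text.encode("utf-8")).hexdigest()}
            for key, text in sorted(cited.items())
        ],
    }


record = audit_record(
    "When is the report due?",
    "Within 30 days when condition X applies (Section 4(b)(ii)).",
    {"sec-4-b-ii": "When condition X applies, a report shall be filed within 30 days."},
)
print(json.dumps(record, indent=2))
```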

Systems that provide traceable reasoning, version awareness, and explicit source linkage support institutional accountability. Systems that generate untraceable summaries cannot meet that bar and should be excluded from regulated workflows.
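Version awareness means answering against the text that was actually in force on the relevant date, not simply the latest text. The sketch below assumes a hypothetical `ProvisionVersion` record carrying effective dates and an authority label (for example, the amendment that introduced it), and treats overlapping date ranges as a data error rather than guessing.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass(frozen=True)
class ProvisionVersion:
    citation_key: str             # canonical key, e.g. "sec-4-b-ii" (hypothetical)
    text: str
    effective_from: date          # first day this version applies
    effective_to: Optional[date]  # first day it no longer applies; None if still in force
    authority: str                # source of the change, e.g. an amendment identifier


def version_in_force(versions: list[ProvisionVersion], citation_key: str, on: date) -> ProvisionVersion:
    """Return the single version of a provision in force on the given date."""
    matches = [
        v
        for v in versions
        if v.citation_key == citation_key
        and v.effective_from <= on
        and (v.effective_to is None or on < v.effective_to)
    ]
    if not matches:
        raise LookupError(f"no version of {citation_key} in force on {on}")
    if len(matches) > 1:
        # Overlapping effective ranges are a data-quality problem, not something to guess about.
        raise ValueError(f"overlapping versions of {citation_key} on {on}")
    return matches[0]


# Hypothetical history: the filing deadline was amended effective 2023-07-01.
history = [
    ProvisionVersion("sec-4-b-ii", "Reports are due within 45 days.",
                     date(2019, 1, 1), date(2023, 7, 1), "Amendment A"),
    ProvisionVersion("sec-4-b-ii", "Reports are due within 30 days.",
                     date(2023, 7, 1), None, "Amendment B"),
]
print(version_in_force(history, "sec-4-b-ii", date(2022, 3, 15)).authority)  # Amendment A
print(version_in_force(history, "sec-4-b-ii", date(2023, 7, 1)).authority)   # Amendment B
```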

Public trust depends on clarity. Institutional resilience depends on defensibility.

When conversational AI is built on disciplined retrieval and citation governance, it does not replace legal expertise. It augments it with structured transparency.

In environments where accuracy shapes public confidence, conversational AI cannot be opaque. It must be traceable, auditable, and defensible.

Anything less is not transformation. It is risk.

See How This Works in Practice

Most teams know AI creates risk. Few know how to fix it.

Our upcoming webinar breaks down how citation-backed systems work in real legal and policy environments.

Reserve your seat: How Conversational AI is Changing Legal Research and Analysis
